| Lead research institution | Yonsei University (연세대학교) |
|---|---|
| Principal investigator | 손광훈 |
| Participating researchers | 민동보, 최종국 |
| Report type | Final report |
| Country of publication | Republic of Korea |
| Language | Korean |
| Publication date | 2017-10 |
| Project start year | 2016 |
| Supervising ministry | Ministry of Science, ICT and Future Planning (미래창조과학부) |
| Registration number | TRKO202100007080 |
| Project ID | 1711043023 |
| Program name | 디지털콘텐츠원천기술개발 (Digital Content Core Technology Development) |
| DB build date | 2021-07-24 |
| Keywords | Big data; Deep learning; Multiview rendering; Single image depth estimation; Augmented reality |

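□ Construction of a high-quality, large-scale RGB+D database

• Development of an indoor/outdoor RGB+D database acquisition and processing system (Yonsei University, Chungnam University)

• Construction of a high-quality FHD RGB+D database containing real indoor/outdoor scenes (2,000,000 RGB+D sets in total) (Yonsei University, Chungnam University, Netcom Solution)

• Construction of an RGB+D database storage server and development of a database search system (Netcom Solution)

□ Model learning technology for deep network-based single image depth estimation

• Development of deep network-based global/local depth estimation model learning technology (Yonsei University)

• Learned model-based single image depth estimation technology (Yonsei University)

□ Depth image refinement technology based on device characteristics

• Development of RGB+D big data-based depth image refinement technology (Yonsei University)

• Development of user interaction-based depth image refinement technology (Chungnam University)

(Source: Summary, p. 5)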

□ Purpose & Contents

□ Goal: High-quality 2D-to-Multiview contents generation from Large-scale RGB+D Database

□ Research contents:

▪ Construction of high-quality and large-scale RGB+D database

• RGB+D data acquisition system (HW/SW)

• 2,000,000 sets RGB+D database construction

• RGB+D database storage server construction

▪ Single image depth estimation using deep neural networks

• Global and local predictions for predicting 3D structure from a single image

• Single image depth estimation based on learned CNNs

▪ Depth image refinement according to the device characteristics

• Depth image refinement based on large-scale RGB+D database

• Interactive depth image refinement algorithm

□ Results

1. Construction of high-quality and large-scale RGB+D database

□ Development of RGB+D data acquisition system

▪ (Indoor) Development of Kinect v2 RGB-depth camera registration technology

• To get the depth image, we use the Kinect v2 camera

• Registration technology needs to be developed since the color and depth sensors are located in different spatial locations (Fig. 1-1)

• We develop Kinect v2 depth and color registration software using the OpenCV and CUDA libraries in the Linux environment (Fig. 1-2)

• For the camera calibration, we take a sequence of images of a checkerboard pattern taken from varying positions (Fig. 1-3)

• Coordinate information of each vertex of chessboard pattern in color image is obtained by corner detection and transformed to the IR image coordinates.

• The relation between the two cameras is represented by a 3x3 rotation matrix and a 3x1 translation vector, which are the extrinsic parameters of the camera

(equation)

• 12 equations can be obtained through the actual spacing between the coordinates in the image and the known matching patterns obtained through corner detection. The projection matrix can be found using SVD

• Then, a point of the IR camera can be projected onto the coordinate system of the color camera by using the rotation matrix and the translation vector obtained by the matching process
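The projection step described above can be sketched as follows. This is a minimal illustration, not the report's registration software: it assumes a standard pinhole model for the color camera, and the function name `project_ir_point_to_color`, the intrinsics `fx`, `fy`, `cx`, `cy`, and all numeric values are hypothetical.

```python
def project_ir_point_to_color(point_ir, R, t, fx, fy, cx, cy):
    """Map a 3D point given in the IR camera frame to a color-image pixel.

    R is a 3x3 rotation matrix (list of rows) and t a 3-vector; together they
    are the extrinsic parameters relating the IR camera to the color camera.
    """
    # Rigid transform into the color camera frame: X_c = R * X_ir + t
    x_c = [sum(R[i][j] * point_ir[j] for j in range(3)) + t[i] for i in range(3)]
    if x_c[2] <= 0:
        raise ValueError("point is behind the color camera")
    # Pinhole projection with the (assumed) color camera intrinsics
    u = fx * x_c[0] / x_c[2] + cx
    v = fy * x_c[1] / x_c[2] + cy
    return u, v


# Hypothetical values for illustration only
R_identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t_shift = [0.05, 0.0, 0.0]  # 5 cm horizontal offset between the two sensors
u, v = project_ir_point_to_color([0.0, 0.0, 1.0], R_identity, t_shift,
                                 fx=1000.0, fy=1000.0, cx=960.0, cy=540.0)
```

In practice the rotation, translation, and intrinsics would come from the checkerboard calibration rather than being set by hand.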

▪ (Outdoor) Development of ZED stereo camera distortion correction technology

• Camera calibration using stereo image pair (Fig. 1-4)

• We develop stereo camera calibration software using MATLAB

▪ (Outdoor) Development of stereo image correction technology

• Extracting stereo calibration parameters using checkerboard pattern

• We use a 7x8 checkerboard (pattern size 26 mm) for the ZED camera and a 7x10 checkerboard (pattern size 50 mm) for the Wide Baseline (WB) camera

□ (Indoor/outdoor) RGB+D database processing algorithms

▪ (Indoor) Kinect v2 depth super-resolution

• We now describe our model for depth upsampling

• Let g be the aligned sparse depth image and I be the intensity image in a vector form

• Then, our objective is to estimate the underlying high-quality depth image u by jointly using I and the latent structure of u.

• Objective Function: we want the final estimate u to be similar to the initially observed data g and be consistent with their neighboring elements. Formally, we minimize an objective function in the form:

(equation)

• where the first and second terms denote the data consistency and regularization terms, respectively

• We introduce the regularity of the underlying depth image by Welsch's function

• The reason for adopting such a function is twofold. First, it is a robust M-estimator, which has robustness and edge-preserving properties. Second, it smoothly approximates the zero-norm.

• This function saturates as the neighboring pixels become dissimilar and ensures that discontinuities in the depth image are not penalized, minimizing the depth blurring compared to the convex functions.

• Note that the assumption about the intensity depth coherence is usually achieved by optimizing a quadratic objective function, which transfers intensity information to the depth image directly

• In the following, we introduce a fast algorithm to solve our optimization problem

• The solver is based on variable splitting and constrained optimization techniques

• First, we can find an equivalent formulation as follows

(equation)

• The auxiliary variable v is introduced to separate the calculation of the data consistency term and the nonconvex regularizer

• The rationale behind this formulation is that it may be easy to solve the constrained problem rather than solving its unconstrained counterpart

• We adopt the ADMM iteration for the above equation

(equation)

• where γ is the augmented Lagrangian multiplier, β is the penalty parameter

• The u-subproblem is equivalent to solving the following normal equations

(equation)

• We apply the preconditioned conjugate gradient method to update u

• The previous solution is used as an initial point for the PCG iteration

• Using proximal approximation, the γ-subproblem can be computed as follows:

(equation)

• It is straightforward to see that our model determines the regularity of the output depth image via addition. The structures of intensity and depth images are jointly considered in the operation

• In other words, we iteratively compensate the inherent structure discrepancy between two images

• This is a noticeable difference from traditional approaches whose affinity depends solely on appearance

• For example, if two nearby objects share similar intensity, edge blurring frequently occurs in the conventional approaches

• However, our model discourages the influence of the other side in a different range

• Our model does not blur depth discontinuities even when the corresponding intensity image has low-contrast edges

• Also, for the textured region in an intensity image, our model gradually eliminates the perturbation caused by the texture pattern (Fig. 1-5)
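The saturation behaviour of Welsch's function mentioned above can be illustrated with a short sketch. The parameterization used here, rho(x) = (c^2 / 2) * (1 - exp(-(x / c)^2)), is one common form and is an assumption; the report's exact scaling and parameter values are not given in this summary.

```python
import math


def welsch(x, c=1.0):
    """Welsch penalty in an assumed, commonly used parameterization."""
    return 0.5 * c * c * (1.0 - math.exp(-(x / c) ** 2))


def quadratic(x):
    """Convex quadratic penalty, for comparison."""
    return 0.5 * x * x


# Small differences are penalized almost identically by both functions,
# while a large difference (a depth edge) saturates under Welsch.
small_gap = abs(welsch(0.01) - quadratic(0.01))
welsch_at_edge = welsch(10.0)
quadratic_at_edge = quadratic(10.0)
```

Because the penalty stops growing once neighboring pixels differ strongly, a depth discontinuity costs at most c^2 / 2, whereas a quadratic penalty keeps increasing and therefore favors blurred edges.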

▪ (Outdoor) Stereo depth prediction based on Deep-Learning

• For depth estimation, we use MC-CNN algorithm

• MC-CNN accepts a pair of patches as input values

• There are two types of architecture: fst (fast architecture) and acrt (accurate architecture)

• Both architectures have the structure of the Siamese Network that shares weights among the sub-networks

• The sub-network consists of multiple convolution and ReLU layers

• Fst uses hinge loss as loss function, acrt uses cross-entropy loss when training

• A cost volume is generated as a result of the network

• Cost aggregation is achieved through multiple rounds of the CBCA (Cross-based Cost Aggregation) algorithm and semi-global matching

• After cost aggregation, the disparity map is predicted through the WTA (Winner-Takes-All) algorithm

• Cost-refinement is achieved by the bilateral filter and interpolation

• As shown in the table below, the error difference between fst and acrt is not large, but their runtimes differ by a factor of approximately 800 (Table 1-1)

• Therefore, fst is judged to be the more effective choice for this task in terms of error versus runtime, and the disparity map is predicted using fst

• Depth can be estimated by dividing the product of focal length and the distance(B) between two cameras by Disparity (d) (Fig. 1-6)

Depth = (FocalLength*B) / d

• Running MC-CNN smoothly requires an Nvidia Titan or Nvidia 1080 graphics card. More than 32 GB of RAM is required to quickly process confidence maps and disparity maps
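The winner-takes-all selection and the disparity-to-depth relation above can be sketched for a single pixel as follows. The function names, the cost values, and the focal length are hypothetical; the 0.12 m baseline corresponds to the ZED baseline length quoted in this report.

```python
def winner_takes_all(costs):
    """Return the disparity index with the lowest matching cost for one pixel."""
    return min(range(len(costs)), key=lambda d: costs[d])


def disparity_to_depth(disparity_px, focal_length_px, baseline_m):
    """Depth = (FocalLength * B) / d, with B in metres and d in pixels."""
    if disparity_px <= 0:
        return float("inf")  # zero disparity corresponds to a point at infinity
    return focal_length_px * baseline_m / disparity_px


# Hypothetical aggregated costs for disparities 0..3 at one pixel
pixel_costs = [0.9, 0.7, 0.2, 0.6]
best_disparity = winner_takes_all(pixel_costs)
depth_m = disparity_to_depth(best_disparity, focal_length_px=700.0, baseline_m=0.12)
```

A full pipeline would apply the same selection to every pixel of the aggregated cost volume before refinement.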

▪ (Outdoor) Confidence prediction for stereo matching

• It is difficult to obtain the depth map in outdoor environment, thus we use ZED stereo camera to obtain the depth map by using stereo matching methods

• However, the estimation of accurate corresponding pixels between stereo image pairs remains an unsolved problem in particular in the presence of textureless or repeated pattern regions, and occlusions

• In order to address these issues, we propose a confidence estimation algorithm for the depth map from stereo matching

• Most conventional methods use pixel-level confidence features such as median deviation distributions in four different scales, left-right difference, maximum likelihood, peak ratio, and negative entropy measure

• We aim at improving the performance of the confidence prediction by exploiting spatially coherent confidence features

• Framework of the proposed confidence estimation methods, consisting of confidence feature augmentation (CFA) and hierarchical confidence map aggregation (HCMA) is as follows (Fig. 1-7)

• In testing phase, the augmented confidence feature is built by concatenating the pixel-level confidence feature and the superpixel-level confidence feature (Fig. 1-8)

• We define pixels with a confidence value higher than 0.7 as reliable and pixels with a confidence value lower than 0.7 as unreliable, and propagate reliable pixels to unreliable regions

• We evaluate the proposed method against conventional learning-based approaches such as Haeusler et al., Spyropoulos et al., and Park et al., and deep learning-based methods such as Poggi et al. and Seki et al. on the Middlebury and KITTI datasets (Fig. 1-9)

• The results show that the proposed confidence estimator exhibits a better performance than conventional per-pixel classifiers and CNN-based approaches

• To verify the robustness of the confidence measures, we refine the disparity map using confidence maps estimated by several confidence measure approaches including ours and measure the average bad matching percentage (BMP) (Fig. 1-10)

• The results show that the erroneous matches are reliably removed using the proposed confidence measure
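The confidence-based refinement and the BMP evaluation described above can be sketched as follows. The 0.7 threshold is taken from the text; the propagation rule (copy the nearest reliable disparity on the same row) and the 1-pixel error tolerance are assumptions made for illustration, since the report's actual propagation scheme is not specified in this summary.

```python
def reliable_mask(confidence, threshold=0.7):
    """True where the confidence value is higher than the threshold."""
    return [[c > threshold for c in row] for row in confidence]


def propagate_along_rows(disparity, reliable):
    """Replace unreliable disparities with the nearest reliable one on the row."""
    filled = []
    for d_row, r_row in zip(disparity, reliable):
        reliable_cols = [x for x, ok in enumerate(r_row) if ok]
        new_row = []
        for x, d in enumerate(d_row):
            if r_row[x] or not reliable_cols:
                new_row.append(d)  # keep reliable pixels (or rows with no support)
            else:
                nearest = min(reliable_cols, key=lambda c: abs(c - x))
                new_row.append(d_row[nearest])
        filled.append(new_row)
    return filled


def bad_matching_percentage(disparity, ground_truth, tolerance=1.0):
    """Percentage of pixels whose disparity error exceeds the tolerance."""
    total = bad = 0
    for d_row, g_row in zip(disparity, ground_truth):
        for d, g in zip(d_row, g_row):
            total += 1
            bad += abs(d - g) > tolerance
    return 100.0 * bad / total


# Hypothetical one-row example with a single erroneous match
disparity = [[10, 50, 12, 13]]
confidence = [[0.9, 0.2, 0.8, 0.95]]
ground_truth = [[10, 11, 12, 13]]
refined = propagate_along_rows(disparity, reliable_mask(confidence))
bmp_before = bad_matching_percentage(disparity, ground_truth)
bmp_after = bad_matching_percentage(refined, ground_truth)
```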

□ Acquisition of indoor/outdoor RGB+D database

▪ Indoor database

• A variety of indoor scenes recorded by the RGB and depth cameras of the Microsoft Kinect v2 camera are constructed as a video sequence

• We apply our registration and upsampling techniques to the captured data

• A total of 2,000,000 indoor RGB-D data, consisting of raw data, registered depth images, upsampled depth images, and color images obtained from the Kinect v2

• A toolbox for handling the database can easily be used with MATLAB or other data analysis platforms

• Data were captured at a variety of places to ensure the diversity of the database

• In order to avoid problems such as portrait rights in the data obtained, we obtained prior consent and photographs from the individuals appearing in the video.

• The 18 places are as follows: warehouse, cafe, classroom, church, computer room, meeting room, library, laboratory, bookstore, corridor, bedroom, living room, kitchen, bathroom, restaurant, billiard hall, hospital, store.

• In the case of bedrooms, living rooms, kitchens, etc., we asked for cooperation from furniture shops and flagship stores. Images are taken by a team of three people, whose roles are as follows

• The first is a photographer who takes pictures with a fixed tripod. The second person checks the shooting situation using a portable computer. The third person monitors the power source and the surrounding shooting environment (Fig. 1-11)

• Four situations are set up to obtain depth images at various points of view

✔ static camera - static object

✔ static camera - moving object

✔ moving camera - static object

✔ moving camera - moving object

• In order to efficiently manage the indoor RGB + D database, data were classified into 18 categories (Fig. 1-12)

▪ Outdoor database

• We use ZED and Wide Baseline (WB) cameras for the outdoor database (Fig. 1-13)

• ZED camera: provides 1920x1080 and 1280x720 resolutions, a depth range of 0.5 to 20 m, and a baseline length of 12 cm

• Wide baseline camera: provides 1920x1080 and 1280x720 resolutions, a depth range of 2 to 80 m, and a baseline length of 40 cm

• There are two ways to shoot: pictures and videos. Pictures are taken of the surrounding environment with the equipment. Video shooting is divided into three methods: (1) stationary shooting equipment and moving object, (2) moving shooting equipment and stationary object, (3) moving shooting equipment and moving object

• Pictures are taken of parks, apartment complexes, fields, streams, etc. The data are photographed in Daejeon, Cheonan, and Sejong.

• The number of outdoor images is 800,000: 300,000 (Chungnam University 90%, Sejong 7%, others 3%) in the first year and 500,000 (Daejeon Yuseong-gu 60%, Seo-gu 10%, Jung-gu 20%, Cheonan 10%) in the second year (Fig. 1-14)

• There are three shooting programs. (1) ZED picture shooting program: developed to shoot using OpenCV. (2) ZED video recording program: saves a file after collecting information for every 500 frames. (3) Wide Baseline picture shooting program: developed after synchronizing the two camera signals using the mvBluefox SDK.

• Naming rules are defined for image data files and folders (confidence map, depth map, disparity map, rectified images (left, right), and calibration parameters)

□ Construction of large-capacity RGB+D database storage server

▪ Database server construction

• Diagram showing collaboration of each agency for building RGB + D database server is as follows (Fig. 1-15)

• Yonsei University and Netcom Solution Co., Ltd acquired 1 million indoor RGB+D data

• Yonsei University developed image processing technology related to the Kinect v2 camera (Kinect v2 RGB-D camera registration, Kinect v2 depth image super-resolution technology)

• Chungnam University and Netcom Solution Co., Ltd acquired 1 million outdoor RGB+D data

• Chungnam University developed image processing technology related to the ZED camera and the wide-baseline stereo camera (stereo camera distortion correction technology, stereo image registration technology, stereo matching reliability measurement technology)

• Based on the RGB+D database (indoor + outdoor), Netcom Solution Co., Ltd constructed the final RGB+D database server after processing the data (image processing, category classification, indexing)

• Construction of the RGB+D database server is shown in the figure below. It consists of a total of three physical servers

✔ Web application server: RGB+D data browser, Viewer (connected to Internet)

✔ Database server: RGB+D data indexing, storage (internal network)

✔ Storage server: RGB+D data I/O management, file service (internal network)

• In order to save the data collected by each research institute efficiently and without any security vulnerability, only the authenticated users can configure the original raw data and the processed data through the web screen (Fig. 1-16)

▪ Development of RGB + D database search system

• The system extracts a standardized index from the directory name, file name, file attributes, etc. to search / view / utilize RGB+D data and stores it in the DBMS (MSSQL)

▪ RGB+D database release

• In addition to building the RGB+D database server, a separate site was created on the Yonsei University website to promote the database and its use cases. The RGB+D database was released on this website.

• Homepage address : diml.yonsei.ac.kr/DIML_rgbd_dataset (Fig. 1-17)

• Currently 4000 sample data and 1 million RGB+D raw data are uploaded

• Raw_data is divided into 18 categories and can be downloaded by category as shown in Fig. 1-18

• Raw_data has file format ‘png’ for both color and depth image which are easy to use. Color images are encoded in 8 bits and depth images are encoded in 16 bits to contain a range of depth information

• The current website of the RGB+D database has been visited by researchers from more than 25 countries around the world (as of Oct. 10) (Fig. 1-19)

✔ Korea: 1539 people, Taiwan 7, Canada 3, Australia 2, UK 3

✔ China: 97 people, Japan 6, Spain 3, Sweden 1, Israel 1

✔ America: 27 people, Pakistan 4, India 2, Germany 1, Philippines 1

✔ Hong Kong: 12 people, Singapore 4, New Zealand 2, Denmark 1, Poland 1, Russia 1

• After the database was released, more than 1300 users visited the webpage in less than a month

2. Single image depth estimation using deep neural network

□ Global and local network for single image depth estimation

▪ Global network

• The global network takes a whole image as an input, and predicts an overall depth structure at a global level

• Although the input and output differ in appearance, both are renderings of the same scene

• Thus, structure of the input RGB image is roughly aligned with that of the output depth

• We design the global network under these considerations

• Many previous approaches based on CNNs have used fully connected layers, which fix the dimensions of the input and throw away spatial coordinates

• Instead, we use a fully convolutional encoder-decoder architecture that takes the input of arbitrary size and produces results proportional to the size of the input images

• The encoder consists of a series of three 3x3 convolutions and rectified linear unit, followed by 2x2 max-pooling with stride 2 for downsampling

• After each downsampling step, we double the number of feature channels

• We use the first five convolution layers and the following pooling in the VGG architecture

• The decoder progressively enlarges the spatial resolution of convolutional activations through a sequence of deconvolution and convolution layers

• The deconvolution layer is implemented using the transposed convolution and fixed bilinear filter kernel

• There is a great deal of low-level information shared between RGB and depth images, e.g., the location of prominent edges

• Thus, it would be desirable to shuttle this information directly across the network

• To this end, we add skip connections between convolution layers and their symmetric deconvolution layers

• Using such connections boosts the performance and makes training the very deep network easier

• The global network captures the low-frequency structure accurately using a global view of the input, but produces a coarse depth image

• In the following, we will address this issue by developing local networks
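The fixed bilinear filter used by the deconvolution (transposed convolution) layers of the global network can be sketched as follows. The kernel size and stride are not stated in this summary, so the 4x4 kernel for 2x upsampling and the function name `bilinear_kernel` are assumptions; the construction itself is the one commonly used to initialize transposed convolutions for bilinear upsampling.

```python
def bilinear_kernel(size):
    """Build a size x size bilinear interpolation kernel as a list of rows."""
    factor = (size + 1) // 2
    # The kernel centre sits on a pixel for odd sizes and between pixels for even sizes
    center = factor - 1 if size % 2 == 1 else factor - 0.5
    weights_1d = [1 - abs(i - center) / factor for i in range(size)]
    # The 2D kernel is the outer product of the 1D weights
    return [[wy * wx for wx in weights_1d] for wy in weights_1d]


kernel = bilinear_kernel(4)  # assumed size, typically paired with stride 2
kernel_sum = sum(sum(row) for row in kernel)
```

Keeping these weights fixed means the upsampling step adds no trainable parameters, so the decoder's convolution layers carry all of the learning.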

▪ Local network

• It is well known in the literature that there is a trade-off between localization accuracy and the use of global context in deep networks

• The global network employs a series of convolution and max-pooling layers to robustly estimate the global 3D layout of the scene. The subtle details of the depth image, however, are lost during these processes although we add the skip connections

• We additionally predict depth gradients by providing a local region (RGB-patch) as input

• The key idea is that the local network acts as a feature extractor, which preserves the primary depth edges from the input RGB while eliminating unwanted oscillations such as textures

• The local network does not use pooling, as it usually discards useful details essential for single image depth estimation

• It consists of 10 convolution layers with 3x3 filters, followed by the ReLU

• Since depth gradients contain both positive and negative values, the ReLU is not used for the last layer

• We use the batch normalization to alleviate the internal covariate shift by normalizing input distributions of every layer to the Gaussian distribution

□ Integration network for final depth estimation

▪ Let f and g be the outputs of global and local networks, respectively.

▪ These complementary outputs are then seamlessly combined at the integration network

▪ Formally, we solve the following variational problem to estimate the final depth image u

(equation)

• where the first term denotes a fidelity term penalizing the difference between the gradient of the estimated depth and g

• The second one represents global prior knowledge about u, so that u becomes close to f

• λ is a constant to balance the two terms, and is also learned in the deep network

• ∇ denotes the gradient operator in the discrete setting

• Minimizing the above functional can be interpreted as the integration of the gradient field using the quadratic prior from f

• This formulation has a number of benefits over the simple Poisson reconstruction which exploits depth gradients only

• First, the resulting depth image of Poisson reconstruction has scale ambiguity, and thus should be intentionally re-scaled to a certain range

• In contrast, the proposed method avoids such a problem by introducing a quadratic prior to force coercivity

• Second, we use the L1 norm so as to reduce the influence of outliers that may exist in the estimated gradient field

• The functional is convex, but cannot be minimized in a closed form

• We choose Split Bregman iterations as it guarantees fast convergence

(equation)

• Our key observations are that (i) each computation step in the SB iterations can be realized by layers of the CNNs and (ii) given a fixed number of iterations, the optimization procedure can be unrolled like a recurrent neural network

• These allow us to use the back-propagation algorithm to train the whole network end-to-end

• In the following, we detail how the individual steps are realized within the deep network
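As a reading aid, the variational problem and the Split Bregman (SB) steps described in this subsection can plausibly be written as below. This is a reconstruction from the surrounding description (an L1 fidelity term on the gradient, a quadratic prior toward f, and a balancing constant λ), not the report's original equations: the placement of λ, the factor 1/2, the auxiliary variable d ≈ ∇u − g, the Bregman variable b, and the penalty β are assumptions.

```latex
\begin{align}
\min_{u}\; & \|\nabla u - g\|_{1} + \frac{\lambda}{2}\,\|u - f\|_{2}^{2} \\
u^{k+1} &= \arg\min_{u}\; \frac{\lambda}{2}\,\|u - f\|_{2}^{2}
          + \frac{\beta}{2}\,\bigl\|d^{k} - (\nabla u - g) - b^{k}\bigr\|_{2}^{2} \\
d^{k+1} &= \operatorname{shrink}\!\bigl(\nabla u^{k+1} - g + b^{k},\; 1/\beta\bigr) \\
b^{k+1} &= b^{k} + \nabla u^{k+1} - g - d^{k+1}
\end{align}
```

Under these assumptions the u-update is a linear system, (λI + β∇ᵀ∇)u = λf + β∇ᵀ(d + g − b), the d-update is an element-wise shrinkage, and the b-update is an element-wise sum of four same-sized terms.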

▪ Unrolling procedure

• u-update step

• The u-update step is quadratic and the minimizer satisfies the following normal equations

(equation)

• where ∇ ∙ is the divergence operator, the adjoint of the gradient

• In matrix/vector form, the left-hand side becomes a block Toeplitz matrix, which can be diagonalized by the fast Fourier transform (FFT)

• Therefore, the u-update step can be implemented with three FFT calls and convolution layer that has fixed filter coefficients for the divergence operator

• d-update step

• Regarding the d-update (basis pursuit problem), solutions are obtained by element-wise shrinkage

(equation)

• where shrink is soft-thresholding operator

• It is a composition of element-wise division and max operator, and thus can be implemented with a standard Hinge function

• Finally, the b-update is an element-wise sum of four inputs of the same size
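The element-wise d-update and b-update can be sketched on flat lists of values as follows. The soft-thresholding operator is the standard one; the splitting d ≈ ∇u − g, the threshold 1/β, and the function names are assumptions made for illustration rather than details confirmed by this summary.

```python
def shrink(x, threshold):
    """Soft-thresholding: x / |x| * max(|x| - threshold, 0), with shrink(0) = 0."""
    magnitude = abs(x)
    if magnitude == 0.0:
        return 0.0
    return x / magnitude * max(magnitude - threshold, 0.0)


def d_update(grad_u, g, b, beta):
    """Element-wise shrinkage of (grad_u - g + b) with threshold 1 / beta."""
    return [shrink(gu - gi + bi, 1.0 / beta) for gu, gi, bi in zip(grad_u, g, b)]


def b_update(b, grad_u, g, d):
    """Element-wise sum of four same-sized inputs: b + grad_u - g - d."""
    return [bi + gu - gi - di for bi, gu, gi, di in zip(b, grad_u, g, d)]


# Hypothetical values: gradient of the current depth estimate vs. predicted gradients
grad_u = [2.0, 0.25, -1.5]
g = [0.0, 0.0, 0.0]
b = [0.0, 0.0, 0.0]
d = d_update(grad_u, g, b, beta=2.0)
b_next = b_update(b, grad_u, g, d)
```

Since both updates are simple element-wise operations, they map directly onto network layers through which gradients can be back-propagated.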

• We show the final and intermediate results from our deep variational model

• The global network predicts the overall depth structure from a global perspective

• The local network eliminates the unwanted oscillation caused by appearance, and extracts depth gradients from a single image

• It can be seen that the estimated gradient fields still contain high-frequency gradients that do not coincide with depth images; however, the integration network robustly suppresses these defects in the final depth prediction (Fig. 2-2)

• The proposed method produces visually more plausible predictions with sharp depth transitions, aligning to RGB details

• We further present results for the KITTI dataset, which consists of outdoor scenes with depths captured by the Velodyne LiDAR

• The Velodyne LiDAR produces sparse depth values for less than 20~30% of the pixels in half-resolution

• Aside from this, the depth values are provided only at the bottom part of the RGB image

• Thus, we use our outdoor dataset for generating training data

• The below figure shows examples of predictions on the KITTI dataset (Fig. 2-4)

• The proposed method predicts depth at the upper part of the RGB image although depth values do not exist there in the KITTI dataset

3. Depth refinement algorithm according to device characteristics

□ Depth refinement based on RGB+D big-data

▪ Depth refinement using deep neural network

• For the depth refinement, we propose a new approach in which the regularization function and the penalty parameter are learned from a large-scale RGB-D training dataset.

• Different from the point-wise proximal mapping based on the handcrafted regularizer, the proposed method learns and aggregates the mapping through CNNs

• Deeply aggregated alternating minimization for depth image restoration

• Given an observed image f and a balancing parameter λ, we solve the corresponding optimization problem:

(equation)

• D is a discrete implementation of horizontal and vertical derivative. Φ is a regularization function that enforces the output to meet desired statistical properties

• We reformulate the original alternating minimization iterations with the following steps

(equation)

• where v is an auxiliary variable of the AM iteration. NN denotes a convolutional network parameterized by ω

• This formulation allows us to turn the optimization procedure using AM into a cascaded neural network architecture, which can be learned by the standard back-propagation algorithm

• For joint restoration, we adopt the halfway concatenation. Another sub-network is introduced to extract the effective representation of the guidance image, and is then combined with intermediate features of f

(equation)

• Network architecture

• The proposed network consists of a deep aggregation network, a parameter network, a guidance network, and a reconstruction layer; this structure is iterated k times, followed by the loss layer

• Deep aggregation network consists of 10 convolutional layers with 3x3 filters

• Each hidden layer of the network has 64 feature maps

• Since v contains both positive and negative values, the rectified linear unit is not used for the last layer

• The input distributions of all convolutional layers are normalized to the standard Gaussian distribution

• We also extract the spatially varying γ by exploiting features from the eighth convolutional layer of the deep aggregation network. The ReLU is used for ensuring the positive values of γ

• For joint image restoration, the guidance network consists of 3 convolutional layers, where the filters operate on 3x3 spatial region

• It takes the guidance image as an input, and extracts a feature map which is then concatenated with the third convolutional layer of the deep aggregation network (Fig. 3-1)

□ Depth refinement based on user interaction (depth image)

▪ Simple user interaction input (Fig. 3-2)

▪ Object Segmentation technique

• Distinct object segmentation to be refined

• Design of algorithms based on sparse interpolation

(equation)

▪ Depth refinement algorithm

• Refinement of depth information of area containing error

• Refinement algorithms (Fig. 26)

(equation)

□ Depth refinement based on user interaction (depth video)

▪ Depth video data refinement technology

• Estimated depth images can contain unexpected errors

• Depth information is refined through the following series of steps (Fig. 3-4)

▪ Scribble propagation

• A per-frame user scribble is required for object segmentation

• The user cannot draw scribbles on every frame

• Scribble propagation is therefore required for each frame.

• The scribble is propagated using optical flow

• A bounding box is used to speed up the optical flow computation

▪ Object segmentation

• Apply Edge Aware Filter for object segmentation

• Applying EAF to the grayscale of a color image yields a weight for the scribble color distribution

• An object segmentation image can be obtained by applying a threshold value to the weight

• Due to the density of the scribble, there may be holes in the object segmentation image

• Dilation and erosion are applied to fill the holes
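The thresholding and hole-filling steps above can be sketched as follows. The weight map here is a hypothetical stand-in for the edge-aware filter output, and the 0.5 threshold and 3x3 structuring element are assumptions; dilation followed by erosion is the standard morphological closing.

```python
def threshold_mask(weights, threshold):
    """Binary object mask: 1 where the weight reaches the threshold."""
    return [[1 if w >= threshold else 0 for w in row] for row in weights]


def _morph(mask, combine):
    """Apply max (dilation) or min (erosion) over a 3x3 window clipped at borders."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [mask[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            out[y][x] = combine(window)
    return out


def fill_holes(mask):
    """Morphological closing: dilation followed by erosion."""
    return _morph(_morph(mask, max), min)


# Hypothetical 7x7 weight map: a 3x3 object with one low-weight pixel in the middle
weights = [[0.0] * 7 for _ in range(7)]
for y in range(2, 5):
    for x in range(2, 5):
        weights[y][x] = 0.9
weights[3][3] = 0.1  # hole caused by sparse scribbles
mask = threshold_mask(weights, threshold=0.5)
closed = fill_holes(mask)
```

Closing fills holes smaller than the structuring element while leaving the outer boundary of the object unchanged; larger holes would need a larger element or repeated passes.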

▪ Depth information refinement

• Accurate error measurement based on the object segmentation image

• Unlike a single image, the depth information of the object may change depending on the progress of the frame

• There are two ways to refine depth video (linear depth refinement and optical flow based refinement)

• The linear depth refinement method assigns depth information to the start and end frames, respectively, and linearly assigns depth information of the object to the m-th frame between them

• The optical flow based refinement method takes an additional user input and changes the given point to the same depth as the object to be refined when the video data is processed

• Optical flow-based depth refinement is suitable for objects with irregular depth information
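The linear depth refinement described above amounts to linear interpolation between the depths assigned to the start and end frames. A minimal sketch, with a hypothetical function name and example values:

```python
def linear_depth(start_frame, end_frame, start_depth, end_depth, m):
    """Depth assigned to the object in frame m, start_frame <= m <= end_frame."""
    if not start_frame <= m <= end_frame:
        raise ValueError("frame index m must lie between the start and end frames")
    if end_frame == start_frame:
        return float(start_depth)
    alpha = (m - start_frame) / (end_frame - start_frame)
    return (1.0 - alpha) * start_depth + alpha * end_depth


# Hypothetical example: the object moves from 2 m to 4 m over frames 0..4
depths = [linear_depth(0, 4, 2.0, 4.0, m) for m in range(5)]
```

This only suits objects whose depth changes steadily between the two annotated frames, which is why the text reserves the optical flow based method for objects with irregular depth changes.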

4. Quantitative research achievement

□ Domestic patent: application 5, registration 3

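▪ Method and apparatus for generating a depth image for a 2D image (10-2016-0092078, application)

▪ Method and apparatus for measuring the confidence of depth values through stereo matching (10-2016-0094478, application)

▪ Single image-based outer viewpoint synthesis method and image processing apparatus (10-2016-0094697, application)

▪ Apparatus and method for generating depth information for a 2D image, and recording medium therefor (10-2017-0059112, application)

▪ Apparatus and method for refining a depth information image based on user interaction information (10-2017-0091489, application)

▪ Apparatus and method for estimating a drivable area based on a stereo camera, and recording medium therefor (10-1717381, registration)

▪ Apparatus and method for converting a 2D image to 3D (10-1692634, registration)

▪ Method and apparatus for measuring the confidence of depth values through stereo matching (10-1784620, registration)

□ International patent: application 1

▪ Method and apparatus for measuring the confidence of depth values through stereo matching (KR2017-003888, PCT application)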

□ International journal: publication 9

▪ Parallax adjust for visual comfort enhancement using the effect of parallax distribution and crosstalk in parallax-barrier auto-stereoscopic 3D display, Optical Engineering, 2015

▪ Non-parametric human segmentation using support vector machine, IEEE Transactions on Consumer Electronics, 2016

▪ Real-time rear obstacle detection using reliable disparity for driver assistance, Expert Systems with Applications, 2016

▪ Structure selective depth super-resolution for RGB-D cameras, IEEE Transactions on Image Processing, 2016

▪ Fast illumination-robust foreground detection using hierarchical distribution map for real-time video surveillance system, Expert Systems with Applications, 2016

▪ Local area transform for cross modal correspondence matching, Pattern Recognition, 2017

▪ Cross-scale cost-aggregation for stereo matching, IEEE Transactions on Circuits and Systems for Video Technology, 2017

▪ Personness estimation for real-time human detection on mobile devices, Expert Systems with Applications, 2017

▪ Robust interactive image segmentation using structure-aware labeling, Expert Systems with Applications, 2017

□ International conference: publication 8

▪ Deep Self-Correlation Descriptor for dense cross-modal correspondence, European Conference on Computer Vision, 2016

▪ Multi-spectral pedestrian detection based on accumulated object proposal with fully convolutional network, IEEE International Conference on Pattern Recognition, 2016

▪ ANCC FLOW: adaptive normalized cross-correlation with evolving guidance aggregation for dense correspondence estimation, IEEE International Conference on Image Processing, 2016

▪ Edge-aware image smoothing using commute time distance, IEEE International Conference on Image Processing, 2016

▪ Point-cut: interactive image segmentation using one-point supervision, Asian Conference on Computer Vision, 2016

▪ Homography flow for dense correspondences, Asia-pacific Signal and Information Processing Association, 2016

▪ Deeply aggregated alternating minimization for image restoration, IEEE International Conference on Computer Vision and Pattern Recognition, 2017

▪ Depth prediction from a single image with conditional adversarial networks, IEEE International Conference on Image Processing, 2017

□ Domestic journal: publication 2

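▪ Depth image confidence measurement method for constructing an outdoor RGB+D database, Journal of Korea Multimedia Society, 2016

▪ Fast 2-D complex Gabor filter via kernel decomposition, Journal of Korea Multimedia Society, 2017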

□ Technology transfer: 2

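▪ Image processing technology for intelligent video surveillance systems, know-how transfer, KRW 40 million, 2016

▪ Multi-modal image processing and pattern recognition technology for smart video surveillance/security solutions, know-how transfer, KRW 50 million, 2017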

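□ Software registration: 10

▪ Confidence measurement method for stereo matching (C-2016-017054)

▪ Fast global smoothing technique (C-2016-017129)

▪ Transform-based matching technique for efficient depth estimation (C-2016-017053)

▪ Efficient Gabor filtering implementation technique (C-2016-018251)

▪ Fast global filtering technique (C-2016-018250)

▪ Deep-learning-based feature vector weight prediction technique for stereo matching (C-2017-013402)

▪ Unsupervised learning technique for stereo matching based on correspondence consistency (C-2017-013402)

▪ Cost aggregation technique for stereo matching using deep learning (C-2017-013427)

▪ Affine transformation matrix interpolation method (C-2017-015406)

▪ Fast depth image refinement technique based on user interaction (C-2017-015405)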

□ Expected Contribution

1. Expected Contribution

□ 3D TV, Movie, Contents Industry

▪ Most existing 3D content is corrected manually.

▪ Software built on the ‘high-quality 2D-to-multiview contents generation from large-scale RGB+D database’ technology can serve as a professional 2D-to-3D conversion platform that converts existing 2D content to 3D content.

• Minimizing manual correction of 3D content makes low-cost, high-quality 3D content possible

▪ Additionally, existing 2D content can be converted to realistic 3D content using the ‘depth estimation from a single image and multiview rendering’ technology.

▪ The technology can generate revenue by being distributed as commercial software to enterprises in industries such as film production and glasses-free multiview TV.

• The technology is currently being commercialized following receipt of a letter of intent for technology transfer from GFT Technologies Inc

▪ It offers a solution to the shortage of 3D content at a time when glasses-free multiview TVs are expected to be released and 3D movies are widespread

• Currently, because of limitations in production technology, 3D content is limited to animations and documentaries

▪ Technology that converts existing 2D content to multiview 3D content is a key competitive advantage for glasses-free multiview TVs. Therefore, in cooperation with TV manufacturers, it can be bundled and sold with the TV

□ Artificial intelligence industry

▪ The need to detect human emotion has grown in fields such as robotics and marketing

▪ Existing methods detected emotion from voice, text, or visual data, but each used only one of these modalities

▪ Recent works go beyond these data and employ multi-modal data from various sensors to detect emotion

• For example, thermal and infrared data are used by these algorithms

• Recognition rate and accuracy improve over conventional methods

• Furthermore, they can distinguish finer degrees of each emotion

▪ Yonsei Univ. and Netcom solution plan to commercialize emotion detection based on multi-modal data (Fig. 1-3)

• Depth estimated by the developed monocular depth estimation algorithm can be used for emotion detection

• A multi-modal database is being constructed using the image and multi-modal data processing techniques transferred from Yonsei Univ. to Netcom solution

• Joint research toward commercialization is expected to create synergy between Netcom solution (expertise in audio processing) and Yonsei Univ. (expertise in image and multi-modal processing)

□ Smart portable device industry

▪ Applications for smart portable devices include user-friendly photo editing and augmented reality

▪ After depth is estimated from a single image captured by a smart portable device, the user can obtain a high-quality depth image by editing the depth image on the device’s touch screen

▪ This makes it possible to bring photo editing functions that require a high-quality depth image to smart devices

• User-friendly software that minimizes user input by using the depth image can be developed (e.g., using the user’s touch input to change the focus of a photo, or to segment an object from an image and composite it into another photo)

▪ A depth image is required for realistic and natural augmented reality software

▪ In contrast to existing augmented reality software that requires a specific marker (e.g., Vuforia by Qualcomm), virtual objects can be rendered so that they interact naturally with the real image by using the depth image obtained from a single image on the smart device
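
The marker-free rendering described above hinges on a per-pixel depth test between the scene and the virtual object. The following is a minimal sketch, assuming NumPy arrays and a depth map already estimated from the single image. The function name `composite_with_occlusion` and all of its parameters are hypothetical and are not taken from the report.

```python
import numpy as np

def composite_with_occlusion(image, scene_depth, obj_rgb, obj_depth, obj_mask):
    """Overlay a virtual object on an image with a per-pixel depth test.

    image, obj_rgb: (H, W, 3) color arrays; scene_depth, obj_depth: (H, W)
    depths in the same unit; obj_mask: (H, W) bool, True where the object is drawn.
    All names are illustrative assumptions, not an API from the report.
    """
    # The object is visible only where it is drawn and nearer than the scene.
    visible = obj_mask & (obj_depth < scene_depth)
    out = image.copy()
    out[visible] = obj_rgb[visible]
    return out, visible

# Toy scene: left half is a near wall (1 m), right half is far background (5 m).
h, w = 4, 6
image = np.zeros((h, w, 3), dtype=np.uint8)
scene_depth = np.full((h, w), 5.0)
scene_depth[:, : w // 2] = 1.0

# Virtual object: a white plane at 3 m covering the whole frame.
obj_rgb = np.full((h, w, 3), 255, dtype=np.uint8)
obj_depth = np.full((h, w), 3.0)
obj_mask = np.ones((h, w), dtype=bool)

out, visible = composite_with_occlusion(image, scene_depth, obj_rgb, obj_depth, obj_mask)
```

In this toy scene the plane is hidden behind the near wall and drawn only over the far background, which is the kind of interaction the estimated depth image is meant to enable.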

□ 3D printing & modeling industry

▪ The technology can be applied to 3D printing and modeling by estimating depth information from an image captured by a single camera

▪ 3D models of ancient artworks, statues, and paintings can be obtained by inferring geometric information with the technology

• In collaboration with museums and exhibition agencies, realistic 3D content can be provided to audiences

▪ An application that infers body type from a full-body 2D image converted to 3D can be developed and distributed to online shopping websites

• Diverse applications for online shopping websites can be developed, such as clothing recommendations that fit an individual’s body type

▪ Current 3D printing depends on direct scanning to obtain 3D geometric information

▪ In contrast, our technology can obtain high-quality 3D information from a 2D image. Therefore, it is possible to 3D print an object that cannot be scanned directly

• Moreover, in collaboration with 3D printing companies, the technology can be transferred for producing a prototype before an actual product is produced using direct scanning

□ Smart automobile industry

▪ The smart automobile industry currently focuses mainly on developing self-driving vehicles

▪ Google, a front-runner in the smart automobile industry, has achieved unmanned self-driving using multiple sensors such as color cameras, lasers, and lidar. However, this system cannot readily be applied to ordinary vehicles because of its high cost

• Drivable regions can be detected by obtaining depth information from multiple sensors

• Depth information obtained from this system helps detect objects such as pedestrians and vehicles, which is directly related to safety. Therefore, a highly reliable system can be built

• However, these sensors are expensive and limited in resolution

▪ Therefore, a system that estimates depth information and detects drivable regions can be built using this project’s ‘high-quality 2D-to-multiview contents generation from large-scale RGB+D database’ technology

• Obtaining high-resolution depth information from a single color camera achieves both accuracy and competitiveness

• Using a single camera minimizes installation space and the maintenance cost in case of malfunction

▪ By turning this system into a commercial product, revenue can be earned by selling it to car manufacturers or individual consumers

2. Expected Effects

□ [Expected effect: technical aspect] Potential to be supplied as a foundation technology across diverse 3D industries

▪ Estimating a depth image from an image captured by a single camera, without a depth sensor, can replace additional hardware in fields such as 3D TV content, 3D printing, smart devices, and automobiles

▪ Moreover, it is expected to accelerate technology development in these fields and the growth of related industries

□ [Expected effect: technical aspect] Securing a foundation technology for research areas in computer vision and graphics

▪ A foundation technology can be secured for research areas in computer vision (scene understanding, object detection and tracking, activity sensing, etc.) and graphics (high-speed image processing) that already use depth information

□ [Expected effect: economic and industrial aspect] Increased demand as a result of revitalization of the 3D industry

▪ Depth estimation is a foundation technology of the 3D industry, which draws large demand across broad fields such as 3D TV, movies, printing, automobiles, medicine, aviation, and art

▪ Therefore, successful development of the proposed technology is expected to revitalize the 3D industry and increase demand

□ [Expected effect: economic and industrial aspect] Expansion of the existing 3D industry

▪ The proposed technology can be commercialized and reflects state-of-the-art research trends. Therefore, it can coexist with technologies (3D shooting, 3D scanning) already released by the existing 3D industry

▪ Therefore, successful development of the proposed technology is expected to revitalize the existing 3D market. The technology can also be used in most of the 3D market

▪ A 3D market worth 80 billion USD is expected in 2021. Assuming the technology substitutes 15% of that market, demand of 12 billion USD can be expected by 2021
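
The demand figure above follows from a single multiplication. Writing $M$ for the projected 2021 market size and $s$ for the assumed substitution share (both symbols are introduced here only for illustration):

$$
D = s \times M = 0.15 \times 80\ \text{billion USD} = 12\ \text{billion USD}
$$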

(Source: SUMMARY, p. 34)


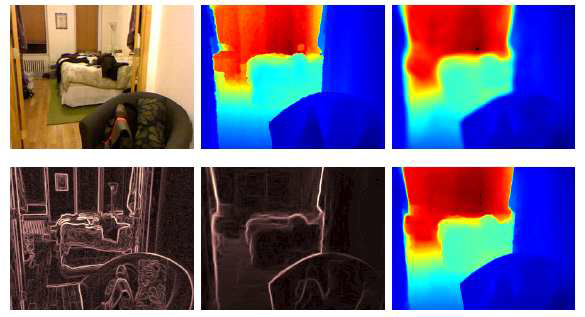

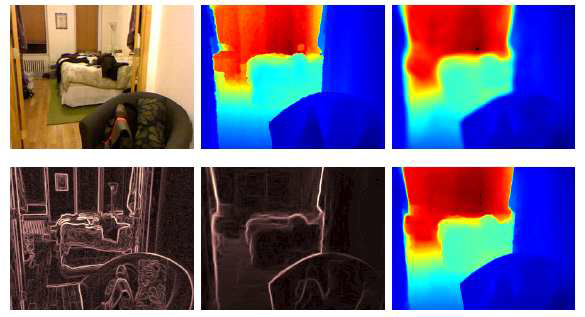

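Developed single-input depth estimation technique: from the top left, in order, the input color image, the depth image acquired with a Kinect camera, the global network output, the gradient of the color image, the local network output, and the final estimated depth image. The local network removes unnecessary texture oscillation from the input color image to infer depth information in the gradient domain. The integration network fuses the results of the global and local networks to output the final depth image.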
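
The caption describes combining a global depth estimate with gradient-domain detail. The report does this with a learned integration network. As a rough illustration of the idea only, the sketch below swaps in a classical, non-learned least-squares fusion (a screened-Poisson-style solve) in NumPy. The names `forward_diff`, `fuse_depth`, and the weight `lam` are hypothetical, and the dense solve is only practical for small images.

```python
import numpy as np

def forward_diff(n):
    """Return the (n-1) x n matrix D with (D @ v)[i] = v[i+1] - v[i]."""
    return np.eye(n, k=1)[:-1] - np.eye(n)[:-1]

def fuse_depth(global_depth, grad_x, grad_y, lam=0.1):
    """Fuse a coarse depth map with gradient-domain estimates by least squares.

    Minimizes lam * ||d - global_depth||^2 + ||Dx d - grad_x||^2 + ||Dy d - grad_y||^2.
    global_depth: (H, W); grad_x: (H, W-1); grad_y: (H-1, W).
    This is a classical stand-in for the learned integration network, not the
    report's method, and it builds dense matrices of size (H*W) x (H*W).
    """
    h, w = global_depth.shape
    # Difference operators acting on the row-major flattened image.
    dx = np.kron(np.eye(h), forward_diff(w))  # horizontal differences
    dy = np.kron(forward_diff(h), np.eye(w))  # vertical differences
    # Normal equations of the quadratic objective above.
    a = lam * np.eye(h * w) + dx.T @ dx + dy.T @ dy
    b = lam * global_depth.ravel() + dx.T @ grad_x.ravel() + dy.T @ grad_y.ravel()
    return np.linalg.solve(a, b).reshape(h, w)

# Toy example: a depth ramp with a step edge.
truth = np.tile(np.linspace(1.0, 2.0, 8), (8, 1))
truth[:, 4:] += 1.0
coarse = np.full_like(truth, truth.mean())  # "global" estimate with no detail
gx = np.diff(truth, axis=1)                 # "local" gradient-domain estimate
gy = np.diff(truth, axis=0)
fused = fuse_depth(coarse, gx, gy)
```

Here the coarse map supplies the overall depth level while the gradients restore the ramp and the step edge, so `fused` is closer to `truth` than `coarse` is. A larger `lam` trusts the global estimate more, and a smaller one trusts the gradients more.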


